How (not) to win CodeChef Long Challenge

I have finished 5th in CodeChef Long Challenge in January 2014. It's pretty good position considering that over 4,000 people took part in the contest. This post will show you how to get in the top 10 list. I will also try to do some post-mortem analysis of why the first place wasn't mine.

I like to win. I didn't know I like it until I started winning. Besides the good feeling, high ranking on a global competition looks really good on my CV. Imagine job interviewer reading your CV and there's your university degree. Mhm, says the interviewer. Then he looks down and pauses... Top 10 in global competition? Wow! :-) Okay, it's not always that impressive, but earning a top 10 ranking in global competition is cheaper and creates higher wow effect than university degree. Think about it.

Okay, so you would like to win. How do you go about it? The first thing to notice is that all the winners always solved all 10 problems in the contest. Usually around 10-30 players get all 10 problems right in any given contest. It means that just by solving all 10 problems you are already securing high ranking for yourself.

The problems are all solvable using information freely available on the Internet. Mostly it's graph theory, search trees, Conway's theory of games, combinatorics, and the usual dynamic programming and greedy algorithms. Basics of these theories are taught routinely at universities and at various courses. But the basics aren't enough. You will have to do some research.

Often all the necessary information is in a couple Wikipedia articles. Sometimes you can find various useful bits on other sites or in various PDFs published by university teachers. Search is your friend. Browse around looking for keywords. Once you have the right keywords, it is usually easy to find the algorithm you need.

If you participated in the contest previously and you feel a déjà vu when looking at some problem, look up editorials for similar problems from past contests. Chances are the new problem is just a variation of the old one.

I don't always follow the theory though. Oftentimes it is easier to reinvent my own algorithm than to find existing one.

Look at the input limits. They tell you the expected complexity of the solution, because limits in CodeChef are always set to require optimal solution. The expected complexity class of the solution suggests the kind of algorithm that will be needed. High limits like N = 100,000 suggest O(NlogN) algorithm, which means some loops and maybe some tree, sorting, or similar algorithm. Lower limits will allow for polynomial solution. Very low limits like N = 10 suggest brute-force solution will be okay.

Another hint can be inferred from solutions of others. You see how many solutions there are, typical memory consumption, and running time. If the problem is solved by hordes of players, it's bound to have some simple solution that doesn't even need research. If some programs run in virtually no time, there's bound to be some very fast algorithm that will give the correct result. If memory consumption is high even in C++ solutions, you are supposed to use dynamic programming or some similar technique.

Another source of extra information is problem setter's psychology. Problem setters face the challenge of creating hard problems without having to be immortal geniuses themselves. The process usually involves taking some standard comp-sci problem with known algorithmic solution and obfuscating the problem using various logical or rhetoric transformations and perhaps layering some extra mathematical problems on top of the core problem. They will then dress the obfuscated problem in some random storyline.

You solve the problems by reversing this process. You first have to remove the story and formulate the problem as pure math detached from physical world. You then have to remove layers of obfuscation one by one. The point is to discover simpler inner problem that, when solved, will allow you to solve the outer problem. Once you get to the core, you either find an algorithm via research or develop your own.

Okay, so you get your algorithm finished, submit it and then... WA! Wrong Answer? You have of course tested your solution against the sample input in the problem statement and compared your output with the sample output. You have also weeded out some nasty output formatting bugs, which you have already discovered while attacking simpler problems. Most likely, you have a bug in your solution.

The way I go about it is to just write random test input generator. If my solution crashes on some input or generates obviously bogus output, I've already found the bug. If it doesn't crash, I write simplified solution using brute-force algorithm and then generate test output from the already generated test input using the brute-force algorithm. Cool, I now have both input and output for complete automated testing of my solution.

The trick here is to keep the brute-force solution super simple. It should follow the logic of the problem statement very closely, so that its correctness can be verified without testing just by reading the code. Lots of small test cases is usually all I need to remove bugs from my solution.

Okay, you don't get WA anymore. You get TLE instead. Uff, but at least there's a progress. The point here is that CodeChef test cases are always extreme. They are designed to explore worst cases in order to weed out solutions that aren't optimal in all situations. The way to go about it is to look at your solution and count loop iterations. Do you really have the big-O complexity you should have? Is the number of neighbors in the graph bounded? If not, you better handle them efficiently.

Oftentimes the big-O is right, but there's a huge constant due to some library call. Using MD5 checksums is convenient but slow, for example. I remove this overhead using profiler. Nothing special. I generate the largest possible test cases with various extreme properties. I then run my solution under profiler while feeding it these large test cases. It always helps.

You are reading this and thinking that this must be a lot of work! Yes, it is. Contrary to time-constrained competitions like the ones organized by Facebook and Google, CodeChef's Long Challenge is designed to take some time. On the positive side, it allows the problems to be harder. Only intelligence and persistence matter here. Speedy typing doesn't.

Chances are your spare time is scarce and you better invest it wisely. Sometimes this means going for the time-constrained competitions, but if you go for CodeChef Long Challenge, there are ways to save time. My time is extremely scarce, so I had to be smart about this. I have some good tips for you.

It's better to win one competition than to get mediocre results in many. So instead of participating regularly, select one contest and invest heavily in that one. You will need several days of your time morning-to-evening. Given enough time, every problem will yield to your pressure to solve it.

Another trick is to preview all the problems at the beginning of the competition and try to solve them only theoretically. You will be spared of coding, testing, debugging, optimizing, and writing helper programs. If you cannot solve all the problems with pencil and paper, you better skip this round of the contest. You can read editorials later, learn from them and try again later. This saves immense amounts of time. You will later discover that 1-3 of your theoretical solutions don't work. Don't worry. This happens to me all the time and I can always find an alternative solution that works.

Related to this is the idea of trying the problems in order of increasing difficulty. Sometimes you get stuck on some easy or medium difficulty problem. Don't skip it. If you cannot solve it, just give up on this round of the competition. Remember you have to solve all 10 problems in order to get into top 10.

And then there are standard productivity tips. Use C# or Java instead of C++. Scripting languages are not acceptable in practice, because they introduce huge constant into your program's computational complexity, which will cause your program to fail with TLE. Find quiet solitary environment that helps you focus. Keep working when you know what to do. Keep brainstorming when you are stuck. Also, don't grow complacent with your early results. The last problem you attack is the hardest one.

Okay, you have got all 10 problems, but one of them is special. Nine problems are scored with either 0 or 1 point depending on whether your results are correct. Challenge problem is scored on a continuous scale relative to other submissions. This is going to be tricky. The highest ranking players draw experience from several previous rounds and submit fairly sophisticated algorithms to the challenge problem. You can easily spend over 50% of your time on the challenge problem alone.

The basic strategy for attacking the challenge problem is "more is better". Test lots of ideas, combine as many as possible in your submission, and run as many iterations of your algorithm as you can fit within the time limit. You will need to generate a pool of test cases and to implement the scoring logic locally, so that you can evaluate your solutions quickly without submitting them.

There's an element of chance in the challenge problem. All players try a set of algorithms and some of them happen to stumble on better algorithms by pure chance while others are unlucky enough to spend their time trying dozens of ineffective algorithms. On the other hand, this might be just the case of not trying enough algorithms with enough diversity to locate the best one.

In January 2014, I submitted the most sophisticated solution by a wide margin. Yet I have been outperformed by as much as 30% by another player who submitted much simpler solution. Feels like bad luck, right?

The only substantial difference was that the winner used different edge sorting criteria than I did. I've tested several sorting criteria and selected the best one. I thought of the sorting criteria used by the winning solution, but I didn't have much time at the moment and dismissed that alternative as likely inferior to my existing solution. Bad move that cost me first place in the contest.

The point to learn here is that it is often better to test larger number of core algorithms instead of hurrying into fine-tuning of the best algorithm found in a quick comparison. Plausible arguments don't count. Only test results count. It's hard science.

The higher ranking players might have tried many more algorithms than I did, but they included only the most powerful ones in their submission. That could explain the relative simplicity of their solutions although pure chance is still a possibility.

There are several little tricks that help too. Other players executed the whole algorithm several times (as opposed to re-running individual steps). One should ideally use different algorithm in every run.

Another trick is to kill seeds. I have used constant seeds in my solution in order to make it easier to debug. The nature of CodeChef scoring system means that submitting the same solution multiple times effectively gives your solution more time to run as far as the solution is randomized with time-based seed.

I am bound to try again, but this time I am going to make it easier for myself. January 2014 was most likely the hardest round of CodeChef Long Challenge ever. It had record-breaking attendance with about 4,300 players participating in the competition. Several top performers took part in the contest. The tricky thing here is that not all rounds are that hard and some of them are easier to win than others. Cheating? No. Just strategy.

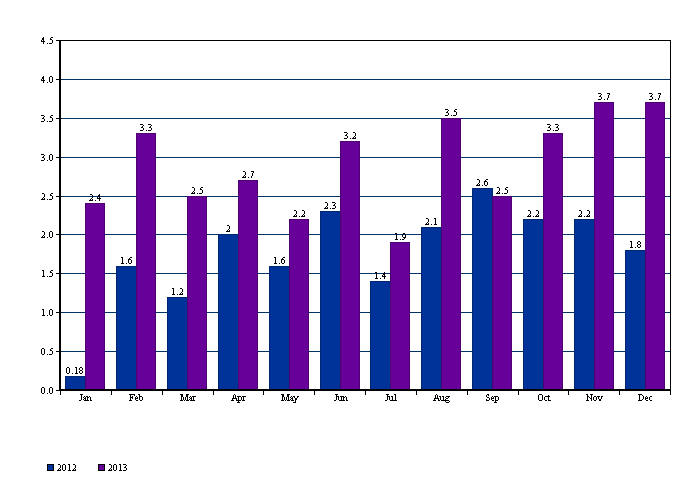

On the graph above, you can see monthly statistics of how many players have participated in that round. Obviously the popularity of CodeChef is growing, which means it will only get harder over time. But there are noticeable dips in March, May, and especially July. These are my target dates. I will try again in one of these months, hopefully getting much better chance to win.

Last note on CodeChef rank. Aside from position in particular contest, CodeChef also computes overall rank for all the contests you participated in. This rank is IMO useful only to regular players. If you participate only once, you likely did one test round before. This test round will pull your rank down, because the system assumes all players are fully invested in the game in every round. Even if you aren't burdened with such test rounds, the rank will take some time to climb. If you don't have time for multiple competitions, the rank is largely irrelevant.